Content Moderation | Vibepedia

Overview

Content moderation is a core component of online trust and safety frameworks, as seen in the efforts of companies like Apple and Amazon to keep their services safe and respectful. The process involves identifying, reducing, or removing user-generated content that is irrelevant, obscene, illegal, harmful, or insulting. Problematic content may be removed outright or left in place with a warning label attached. This matters most on social media platforms like Instagram and TikTok, where user-generated content spreads quickly and can significantly shape online discourse.

🚫 Types of Content Moderation

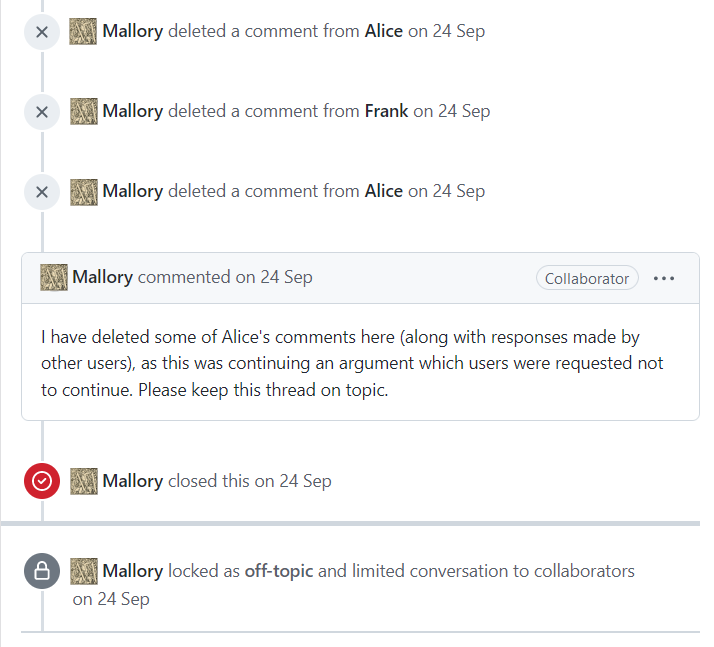

There are several types of content moderation, including pre-moderation, post-moderation, and reactive moderation. Pre-moderation reviews user-generated content before it is published, while post-moderation reviews content after it has gone live. Reactive moderation responds to user reports of problematic content and is often combined with AI-powered moderation tools. Online communities like Stack Overflow and GitHub have also implemented content moderation policies to maintain a safe and respectful environment. Regulation shapes these policies as well: the EU's General Data Protection Regulation (GDPR), under which companies like Facebook and Google have been fined, governs how platforms handle personal data rather than which content they must remove.
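The difference between the three modes comes down to when a post becomes visible and what sends it to a reviewer. The following is a minimal sketch of that distinction, not a description of any real platform's system; the names `Mode`, `Post`, `Platform`, and `review_queue` are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Mode(Enum):
    PRE = "pre-moderation"
    POST = "post-moderation"
    REACTIVE = "reactive moderation"


@dataclass(eq=False)  # compare posts by identity, not by field values
class Post:
    text: str
    visible: bool = False
    reports: int = 0


@dataclass
class Platform:
    """Hypothetical platform that applies one moderation mode."""
    mode: Mode
    review_queue: list = field(default_factory=list)

    def submit(self, post: Post) -> None:
        if self.mode is Mode.PRE:
            # Pre-moderation: hidden until a reviewer approves it.
            post.visible = False
            self.review_queue.append(post)
        elif self.mode is Mode.POST:
            # Post-moderation: published immediately, reviewed afterwards.
            post.visible = True
            self.review_queue.append(post)
        else:
            # Reactive moderation: published, reviewed only if reported.
            post.visible = True

    def report(self, post: Post) -> None:
        # A user report queues the post for review (once).
        post.reports += 1
        if post not in self.review_queue:
            self.review_queue.append(post)

    def review(self, post: Post, approve: bool) -> None:
        # A reviewer's decision sets the final visibility.
        self.review_queue.remove(post)
        post.visible = approve
```

In this sketch a post submitted under `Mode.PRE` stays hidden until `review(post, approve=True)` is called, while under `Mode.REACTIVE` it never reaches the queue unless someone calls `report`.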

🤖 AI-Powered Content Moderation

AI-powered content moderation is increasingly common, with companies like Google and Microsoft developing tools to streamline the moderation process. These tools use machine learning algorithms to identify and flag problematic content, and can be particularly effective at detecting hate speech and harassment. However, AI-powered moderation has been criticized for being overly broad and prone to errors: it can flag legitimate content (false positives) and miss harmful content (false negatives). Companies like Twitter and YouTube are working to develop more nuanced and effective approaches to AI-powered moderation.
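One common way to limit the impact of such errors is to act automatically only on high-confidence scores and send uncertain cases to a human. The sketch below illustrates that routing idea under loud assumptions: `toy_score` is a trivial word-list stand-in for a trained model, and `BLOCKLIST`, `route`, and both thresholds are hypothetical values invented for the example, not taken from any real tool.

```python
# Hypothetical placeholder terms; a real system would use a trained classifier.
BLOCKLIST = {"badword", "slur"}


def toy_score(text: str) -> float:
    """Stand-in for a model: fraction of tokens found in BLOCKLIST (0.0 to 1.0)."""
    tokens = text.lower().split()
    if not tokens:
        return 0.0
    hits = sum(1 for t in tokens if t.strip(".,!?") in BLOCKLIST)
    return hits / len(tokens)


def route(score: float, remove_at: float = 0.9, review_at: float = 0.5) -> str:
    """Map a score to an action; both thresholds are illustrative assumptions."""
    if score >= remove_at:
        return "remove"        # high confidence: act automatically
    if score >= review_at:
        return "human_review"  # uncertain: flag for a moderator
    return "allow"             # low score: leave the content up


for text in ["hello world", "badword hello", "badword slur"]:
    print(text, "->", route(toy_score(text)))
```

Lowering `review_at` sends more borderline content to human moderators and reduces missed cases at the cost of reviewer workload; raising `remove_at` reduces wrongful automatic removals.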

📊 Challenges and Controversies

Content moderation remains contested, with many critics arguing that it can be overly broad and prone to errors. The use of AI-powered moderation tools has been particularly controversial, with some arguing that it can be used to censor certain viewpoints or perspectives. Companies like Facebook and Twitter have been criticized from both directions: some argue that their policies are too lenient, others that they are too strict. Governments and regulatory bodies have also taken up the issue. In the US, Section 230 of the Communications Decency Act shields platforms from liability for most user-generated content and for good-faith moderation decisions, while the EU's General Data Protection Regulation (GDPR) constrains how platforms process personal data. The underlying challenge is finding a balance between free speech and online safety, and companies like Apple and Amazon are working to develop more effective and nuanced content moderation policies.

Key Facts

- Year: 2010-2022
- Origin: Global
- Category: technology
- Type: concept

Frequently Asked Questions

What is content moderation?

Content moderation is the systematic process of identifying, reducing, or removing user-generated content that is irrelevant, obscene, illegal, harmful, or insulting.

Why is content moderation important?

Content moderation is important for maintaining a safe and trustworthy online environment, and for protecting users from harmful or offensive content.

What are the different types of content moderation?

There are various types of content moderation, including pre-moderation, post-moderation, and reactive moderation.

How does AI-powered content moderation work?

AI-powered content moderation uses machine learning algorithms to identify and flag problematic content, and can be particularly effective in identifying and removing hate speech and harassment.

What are the challenges and controversies surrounding content moderation?

Content moderation is not without its challenges and controversies, with many critics arguing that it can be overly broad and prone to errors, and that it can be used to censor certain viewpoints or perspectives.