Community Moderation | Vibepedia

Community moderation refers to the process of managing and regulating online discussions, ensuring that they remain respectful, informative, and engaging…

Overview

Community moderation is a core part of online community management: it keeps discussion spaces positive and respectful for their users. According to experts like Jonathan Zittrain, it can be achieved through a combination of automated tools and human intervention. Stack Overflow, for instance, lets users upvote, downvote, and flag posts, and GitHub lets users report abusive content. Such systems let users take part in moderation while giving human moderators a framework for stepping in when necessary. As noted by researchers like danah boyd, community moderation is essential for preventing online harassment and promoting digital literacy.
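
The interplay of voting, flagging, and human escalation described above can be illustrated with a minimal sketch. It does not reproduce any platform's actual system: the `Post` class, the `vote` and `flag` helpers, and the `FLAG_THRESHOLD` value are all hypothetical names and numbers chosen for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical threshold: how many distinct users must flag a post
# before it is escalated to human moderators.
FLAG_THRESHOLD = 3


@dataclass
class Post:
    post_id: int
    text: str
    upvoters: set = field(default_factory=set)
    downvoters: set = field(default_factory=set)
    flaggers: set = field(default_factory=set)

    @property
    def score(self) -> int:
        """Net score: upvotes minus downvotes."""
        return len(self.upvoters) - len(self.downvoters)

    @property
    def needs_review(self) -> bool:
        """True once enough distinct users have flagged the post."""
        return len(self.flaggers) >= FLAG_THRESHOLD


def vote(post: Post, user: str, up: bool) -> None:
    """Record one vote per user; a new vote replaces the user's earlier one."""
    post.upvoters.discard(user)
    post.downvoters.discard(user)
    (post.upvoters if up else post.downvoters).add(user)


def flag(post: Post, user: str) -> bool:
    """Record a flag and report whether the post now needs human review."""
    post.flaggers.add(user)
    return post.needs_review


post = Post(1, "Buy cheap watches!!!")
vote(post, "alice", up=False)
for user in ("alice", "bob", "bob", "carol"):
    flag(post, user)  # bob's second flag is ignored because flaggers is a set
print(post.score, post.needs_review)  # -1 True
```

Storing voters and flaggers as sets means a single user cannot push a post over the threshold alone, which is one simple way to keep community input from being gamed.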

💻 Automated Moderation Tools

Automated moderation tools let platforms manage large volumes of user-generated content efficiently. Machine learning and natural language processing techniques can help detect and filter out spam, hate speech, and other abusive content. However, as noted by critics like Evgeny Morozov, over-reliance on automated tools leads to both false positives (acceptable content removed) and false negatives (abusive content missed), which is why human oversight remains necessary. Platforms like YouTube and Twitch use AI-powered moderation tools designed to work alongside human moderators in upholding community standards. As observed by experts like Ethan Zuckerman, automated tools can also help surface online harassment and hate speech so that it can be addressed.
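
To make the trade-off between automation and human oversight concrete, the sketch below scores content with a few hand-written rules and routes uncertain cases to a human instead of acting on them. It swaps in simple regular expressions where a real platform would likely use a trained classifier, and the patterns, weights, and thresholds (`SPAM_SIGNALS`, `REMOVE_AT`, `REVIEW_AT`) are assumptions made up for this example.

```python
import re

# Hypothetical signals and integer weights, chosen only for illustration.
SPAM_SIGNALS = [
    (re.compile(r"https?://", re.IGNORECASE), 40),
    (re.compile(r"\b(free|winner|click here)\b", re.IGNORECASE), 30),
    (re.compile(r"(.)\1{4,}"), 30),  # any character repeated 5+ times in a row
]

REMOVE_AT = 70  # assumed: at or above this score, remove automatically
REVIEW_AT = 30  # assumed: at or above this score, send to a human moderator


def spam_score(text: str) -> int:
    """Sum the weights of every signal that matches, capped at 100."""
    total = sum(weight for pattern, weight in SPAM_SIGNALS if pattern.search(text))
    return min(total, 100)


def triage(text: str) -> str:
    """Return 'remove', 'review', or 'allow' for a piece of content."""
    score = spam_score(text)
    if score >= REMOVE_AT:
        return "remove"
    if score >= REVIEW_AT:
        return "review"
    return "allow"


print(triage("See you at the meetup tomorrow"))             # allow
print(triage("Is this free to use?"))                       # review
print(triage("WINNER!!!!! click here http://example.com"))  # remove
```

The second message is harmless but still trips the "free" rule. Because its score falls in the middle band, it goes to a human for review, which is how a would-be false positive gets caught before content is removed.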

📚 Community Guidelines and Standards

Community guidelines and standards give moderation a clear framework. They set out the behavior and norms expected of users and give moderators a basis for enforcement. According to researchers like Whitney Phillips, guidelines should be transparent, concise, and easily accessible to all users. Platforms like Wikipedia and Discord have developed comprehensive community guidelines, which are regularly updated and refined based on user feedback and community input. As noted by experts like Clay Shirky, guidelines can foster a sense of ownership and responsibility among users, encouraging them to contribute to the moderation process and uphold community standards.

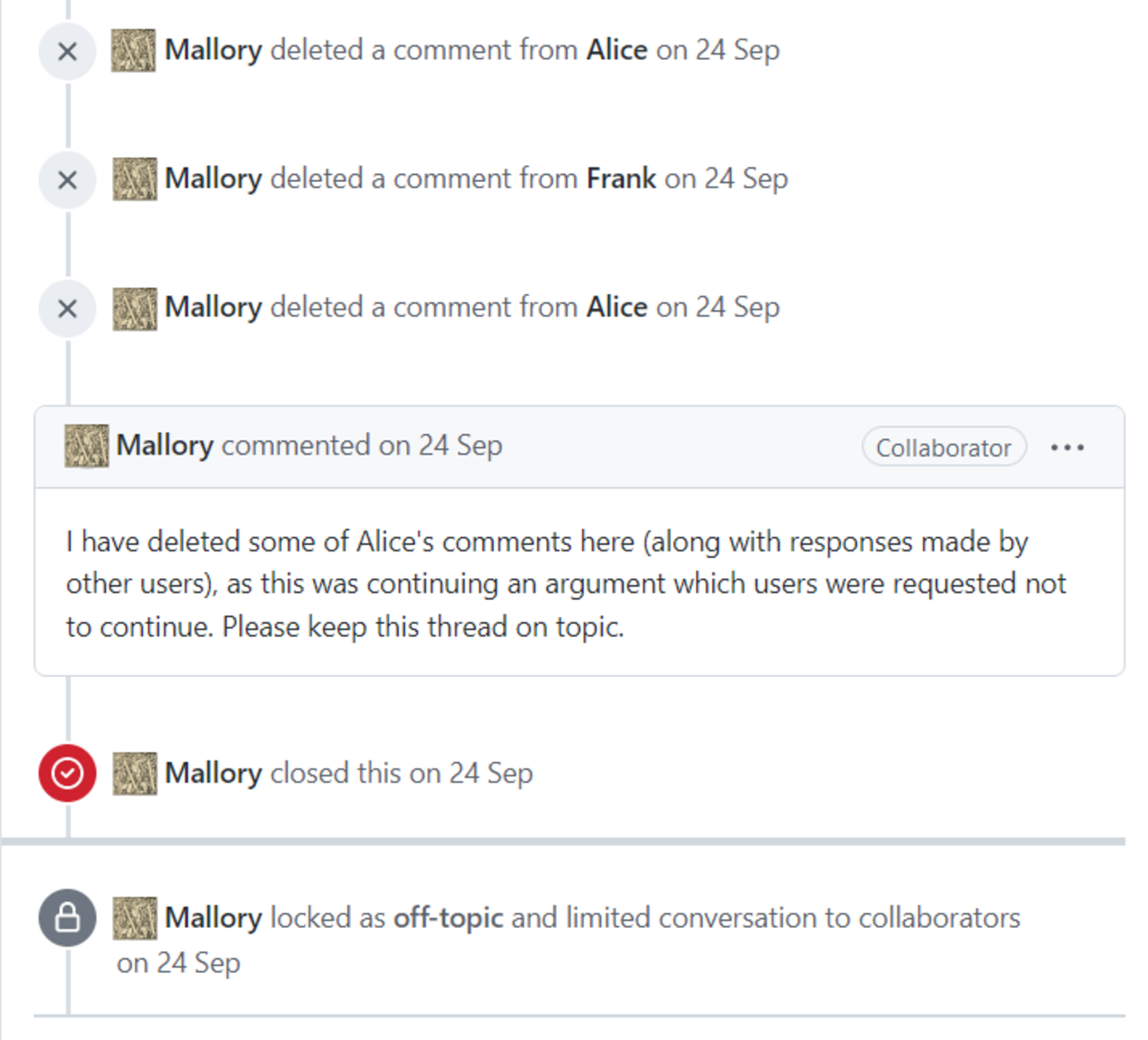

👮‍♀️ Human Moderation and Intervention

Human moderation and intervention are critical to community moderation because they provide a layer of oversight and accountability. Human moderators review and address issues that automated tools miss, ensuring that community standards are upheld and users are protected. According to experts like Sarah Kendzior, moderators should be trained to handle sensitive and complex issues such as online harassment and hate speech. Platforms like Facebook and Twitter employ human moderation teams that work alongside automated tools to enforce community standards. As observed by researchers like Kate Miltner, human moderators can also help identify and address problems with community engagement and participation, promoting a more inclusive and respectful online environment.
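
One way a human moderation team might organize its work is a review queue that surfaces the most sensitive reports first. The sketch below is illustrative only: the `ReviewQueue` class, the `Report` record, and the `SEVERITY` ranking are hypothetical, and real teams will rank report types according to their own guidelines.

```python
import heapq
import itertools
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical severity ranking: a lower number is reviewed sooner.
SEVERITY = {"harassment": 0, "hate_speech": 0, "spam": 2, "off_topic": 3}
DEFAULT_PRIORITY = 1  # assumed: unknown reasons are looked at fairly early


@dataclass(order=True)
class Report:
    priority: int
    sequence: int  # tie-breaker so equal priorities stay first-come, first-served
    post_id: int = field(compare=False)
    reason: str = field(compare=False)


class ReviewQueue:
    """Priority queue of user reports awaiting a human decision."""

    def __init__(self) -> None:
        self._heap: List[Report] = []
        self._counter = itertools.count()

    def submit(self, post_id: int, reason: str) -> None:
        priority = SEVERITY.get(reason, DEFAULT_PRIORITY)
        report = Report(priority, next(self._counter), post_id, reason)
        heapq.heappush(self._heap, report)

    def next_report(self) -> Optional[Report]:
        """Pop the most urgent report, or return None if the queue is empty."""
        return heapq.heappop(self._heap) if self._heap else None


queue = ReviewQueue()
for post_id, reason in [(10, "spam"), (11, "harassment"),
                        (12, "off_topic"), (13, "hate_speech")]:
    queue.submit(post_id, reason)

order = []
while (report := queue.next_report()) is not None:
    order.append(report.post_id)
print(order)  # [11, 13, 10, 12]
```

Harassment and hate speech reports are handled before spam and off-topic ones, and reports of equal severity are handled in the order they arrived.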

Key Facts

- Year: 2010
- Origin: Online communities
- Category: technology
- Type: concept

Frequently Asked Questions

What is community moderation?

Community moderation refers to the process of managing and regulating online discussions, ensuring that they remain respectful, informative, and engaging.

What are automated moderation tools?

Automated moderation tools are software programs that use machine learning algorithms and natural language processing to detect and filter out spam, hate speech, and other forms of abusive content.

What is the role of human moderators in community moderation?

Human moderators play a critical role in community moderation, providing oversight and accountability, and addressing issues that automated tools may miss.

What are community guidelines and standards?

Community guidelines and standards set out the behavior and norms expected of users and give moderators a basis for enforcement.

Why is community moderation important?

Community moderation is important because it helps to maintain a positive and respectful environment for users, prevents online harassment, and promotes meaningful interactions among users.